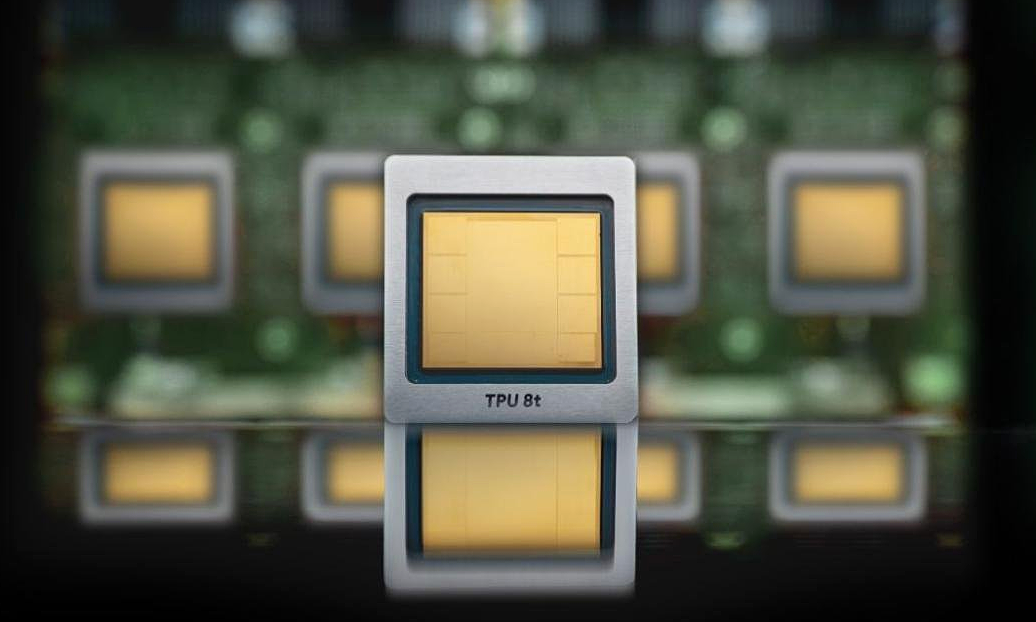

Google has announced two versions of its tensor processing unit (TPU): the TPU 8t, designed for AI model training, and the TPU 8i, designed for inference.

According to Google, the TPU 8t is a “powerful super trainer chip” designed specifically for high-throughput AI workloads. The company says its computing performance is nearly three times that of previous generations.

Specifically, the TPU 8t integrates 9,600 chips in a single super cluster, providing 121 exaflops of computing power (one exaflop is a billion billion, or 10^18, floating-point operations per second, the largest unit of computing performance in common use today) and two petabytes of shared memory linked through a high-speed inter-chip interconnect (ICI). Google says the doubled ICI bandwidth lets even the most complex models scale near-linearly and make full use of the system.
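For a sense of scale, the cluster totals above can be divided by the chip count. This is only a rough sketch: it assumes the totals are spread evenly across all 9,600 chips and that the prefixes are decimal (SI). The per-chip values it prints are derived here for illustration and are not figures published by Google.

```python
# Back-of-the-envelope division of the cluster totals quoted in the article.
# Assumptions (not from Google): even split across chips, decimal SI prefixes.
CHIPS = 9_600
TOTAL_FLOPS = 121e18         # 121 exaflops = 121 * 10^18 operations per second
TOTAL_MEMORY_BYTES = 2e15    # 2 petabytes = 2 * 10^15 bytes


def per_chip(total: float, chips: int = CHIPS) -> float:
    """Return the share of a cluster-wide total that falls on one chip."""
    return total / chips


flops_per_chip = per_chip(TOTAL_FLOPS)
memory_per_chip = per_chip(TOTAL_MEMORY_BYTES)

print(f"{flops_per_chip / 1e15:.1f} petaflops per chip")           # 12.6
print(f"{memory_per_chip / 1e9:.0f} GB of shared memory per chip")  # 208
```

On these assumptions, each chip contributes roughly 12.6 petaflops and about 208 GB to the shared pool.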

“Now, we can shorten training time from months to weeks with the power of more than a million TPU chips in a single server cluster, coordinated by Pathways and JAX,” said a Google representative.

The two chip models: TPU 8t (left) and TPU 8i. Image: Google

Meanwhile, the TPU 8i is what Google calls a breakthrough system for inference and reinforcement learning (RL). The chip delivers ultra-low latency for AI agent-based workflows and Mixture of Experts (MoE) models. By tripling on-chip SRAM to 384 MB and increasing high-bandwidth memory (HBM) to 288 GB, the chip is said to break the memory barrier by keeping cached data entirely on chip.

The TPU 8i also doubles ICI bandwidth to 19.2 Tb/s and reduces the ICI network diameter by more than 50%. The company also introduced a new engine, the Collectives Acceleration Engine (CAE), which it says cuts on-chip latency by up to a factor of five and minimizes latency when multiple tasks run simultaneously. With this design, Google says the TPU 8i delivers 80% better performance per dollar for inference than the previous generation, enabling fast, interactive and cost-effective user experiences.
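The relative figures in this paragraph can be turned into more concrete numbers with simple arithmetic. The sketch below assumes that "80% better performance per dollar" means 1.8 times as much inference work for the same spend, and that "up to a factor of five" is a best case. The implied previous-generation values are derived here and are not stated in Google's announcement.

```python
# Rough arithmetic on the relative claims quoted in the article.
# Assumption: "80% better performance per dollar" = 1.8x work per dollar.
NEW_ICI_TBPS = 19.2            # doubled ICI bandwidth, in Tb/s
PERF_PER_DOLLAR_GAIN = 0.80    # 80% improvement over the previous generation
LATENCY_FACTOR = 5             # "up to" a factor of five (best case)

# If bandwidth doubled to 19.2 Tb/s, the implied earlier figure is half of it.
prev_ici_tbps = NEW_ICI_TBPS / 2

# Cost of the same amount of work, relative to the previous generation.
relative_cost = 1 / (1 + PERF_PER_DOLLAR_GAIN)
cost_saving = 1 - relative_cost

# Best-case on-chip latency, relative to the previous generation.
relative_latency = 1 / LATENCY_FACTOR

print(f"Implied previous ICI bandwidth: {prev_ici_tbps:.1f} Tb/s")
print(f"Cost for the same work: {relative_cost:.0%} of before "
      f"({cost_saving:.0%} lower)")
print(f"Best-case on-chip latency: {relative_latency:.0%} of before")
```

Read this way, an 80% gain in performance per dollar corresponds to a cost reduction of about 44% for the same workload, not 80%.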

Google said the two new TPU chips are expected to be integrated into Google Cloud data center systems soon. However, the company has not released a detailed roadmap.

According to TechCrunch, with a series of impressive specifications, the two new chips could pose a threat to Nvidia in the market for AI training chips. However, the site also believes Google is unlikely to take on Jensen Huang’s company directly, or at least not yet, especially since the two are partners.

Like Microsoft and Amazon, Google currently uses its self-developed chips to supplement the Nvidia-based systems in its infrastructure rather than to replace them outright. Late last year, Google CEO Sundar Pichai promised that the company's cloud service would offer Nvidia’s latest Vera Rubin chip. That became a reality in the April 23 announcement, when the company also introduced the A5X bare-metal server equipped with Nvidia's Vera Rubin NVL72 platform.

In addition to the two new TPUs, Google introduced a series of other products: the Axion N4A virtual machine, powered by the company's self-developed Arm-based Axion CPU; fourth-generation Google Compute Engine virtual machines with x86 CPUs from Intel and AMD; Virgo Network, a data center platform for AI workloads; the Z4M virtual machine, with high-capacity local SSD storage and RDMA for open parallel file systems; and Google Kubernetes Engine (GKE) for orchestrating agent-based workloads.
