OpenAI has launched a new safety feature that lets users designate a trusted contact in ChatGPT, such as a friend, family member or caregiver, who will receive a notification if the assistant determines that the user intends to harm themselves or faces a risk to their safety.

The company noted that, in addition to learning, exploring and solving problems, some people use ChatGPT to reflect on personal issues, including at times when they are experiencing difficulties or seeking support.

In these situations, the assistant is designed to respond with empathy and encourage users to seek professional help when they need it. Now it can also alert a person the user trusts if a risk to the user's safety is identified.

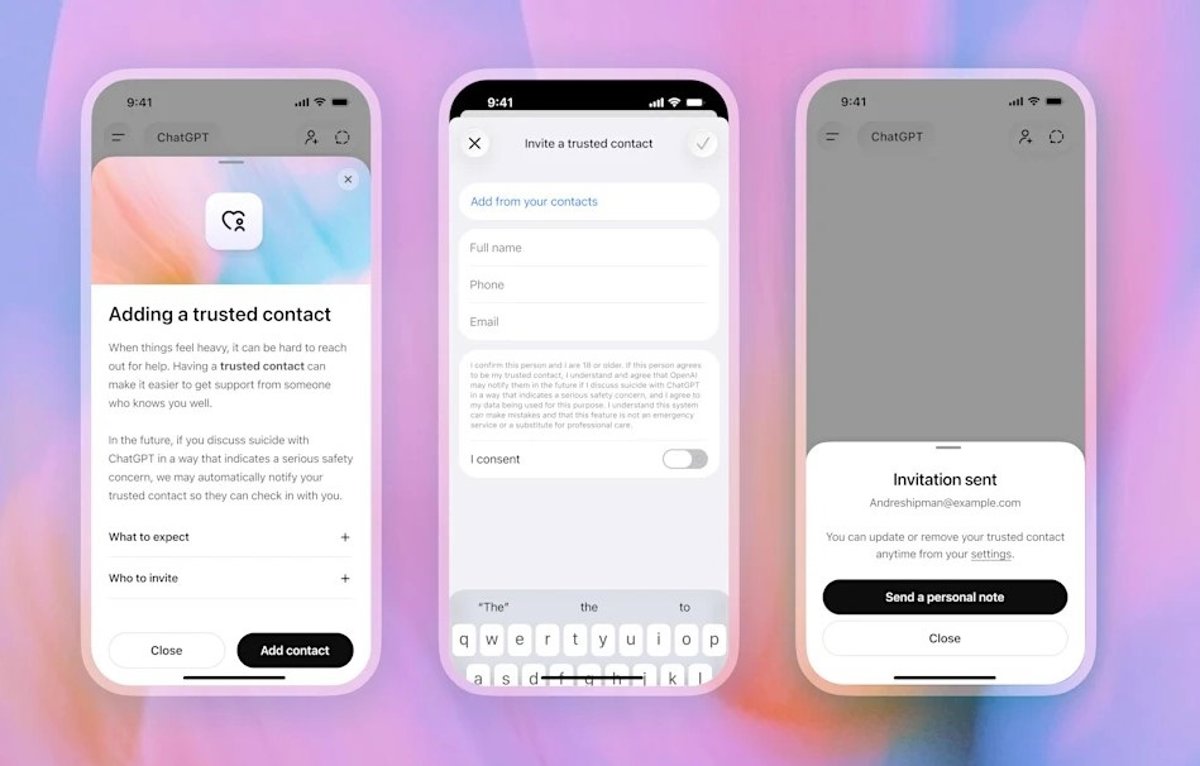

To do this, OpenAI has introduced a new Trusted Contact feature in ChatGPT, which allows adult users to select a person they trust, whether a friend, family member or caregiver, so that this person is notified if the assistant recognizes behavior related to self-harm in a way “that indicates a serious risk to your safety”.

The company detailed this in a statement on its blog, where it explained that the trusted contact is designed to “offer an additional level of support”, along with the helplines already available in ChatGPT.

The feature relies on automated monitoring systems that can detect whether the user is talking about harming themselves or endangering their safety. In these cases, a team of trained OpenAI reviewers assesses the situation and, if they determine that the conversation may indicate a serious safety concern, sends a notification to the trusted contact.

That is, when ChatGPT identifies that the user is in a crisis situation, it will send an alert by email, text message or, if the trusted person has an account, in-app notification, so that they can intervene and offer further support, promoting connection with someone the user already trusts.

This tool builds on the safety notifications already available through parental controls for minors. However, it is not a substitute for professional care or crisis services. “ChatGPT will continue to encourage users to contact helplines for crisis or emergency services when necessary,” the company said.

The trusted contact must be an adult and can be selected in the ChatGPT settings. The designated person will then receive an invitation explaining their role, which they must accept within a week. If the trusted contact declines the invitation, the user can designate another person.