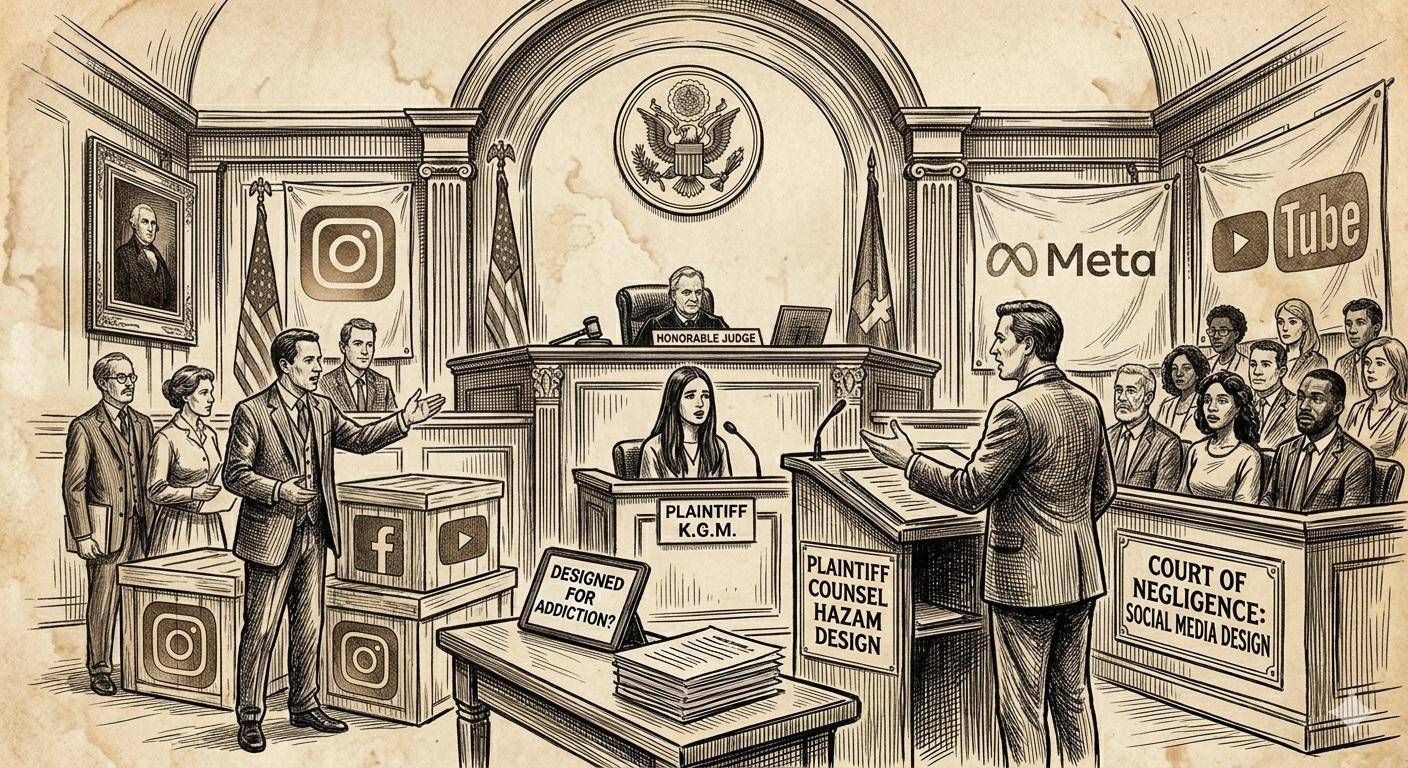

A Los Angeles jury has returned a verdict that could redefine the boundaries of liability for tech giants. The verdict establishes that the design features of some social platforms were a “substantial factor” in causing harm to the mental health of a young user. It awards $6 million in compensatory and punitive damages, shared between the companies involved, marking the first verdict of its kind in a personal injury trial related to social media addiction.

During the trial, the plaintiffs argued that company leaders were aware of the addictive potential of their products. Testimony from senior executives and analysis of internal documents indicated that safety warnings raised by employees were allegedly ignored in favor of strategies aimed at maximizing engagement. “Today, a jury saw the truth and held Meta and Google responsible for designing products that are addictive and harm children”, said plaintiffs’ attorneys Lexi Hazam and Previn Warren. While YouTube expressed regret for the plaintiff’s suffering, Meta announced plans to explore its legal options for an appeal.

Possible consequences in Europe

In Europe, this US verdict could act as a catalyst for enforcement of the Digital Services Act (DSA). Although the European legal system differs from the US one (which is based on common law), the DSA already imposes strict obligations on platforms for the protection of minors and the assessment of systemic risks related to mental health. A finding of “negligent design” in the US could push the European Commission to intensify investigations into “dark patterns” and recommendation algorithms, potentially leading to administrative fines far higher than the American damages award: up to 6% of the global annual turnover of the companies involved.

The case, described as a “watershed case”, or decisive legal precedent, is the first in a consolidated series in which more than 1,600 plaintiffs are ready to bring their experiences to court. Non-profit organizations such as Mothers Against Media Addiction (MAMA) called the decision a “long overdue validation” and are now urging the passage of laws that would impose built-in safety standards for digital products aimed at young people.